More offline professional development if you are working from home or sharing devices!

Now that you have a vetted, best-of-the-best storytime activity list, let’s do some storytime planning with it.

You may already be a proponent of no-theme storytimes or flow storytimes, but if not, today’s exercise is to give this strategy a try.

Why?

Because it’s a way of prioritizing our best content, the activities and components that are most strongly aligned with our goals, over the books and activities that we reach for because they match our theme. I’m personally agnostic on themed or non-themed storytimes, but it should be clear by now that I am 100% a proponent of choosing materials for the right reasons. Testing out a non-themed or flow storytime is one way we can work on putting our priorities into practice.

First, read this post from the amazing Jbrary: Storytime Themes vs. Storytime Flow to give yourself an idea of what a storytime might look like when it’s not centered around a theme.

Then, pick a place to start. It could be one of the activities from your Top 40 List, or it could be a favorite book. (I’ve found that flow storytimes are a great way to make sure I have an inclusive book in my storytimes: instead of starting with a theme and looking for an inclusive title that “fits,” I start with an amazing book and go from there.)

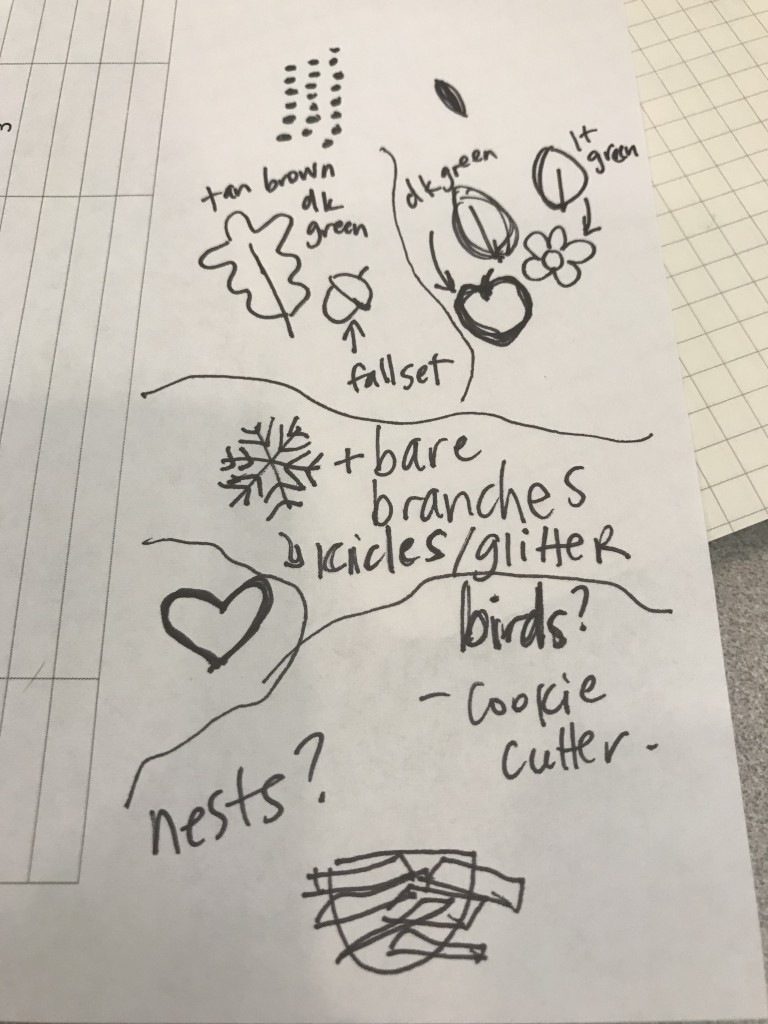

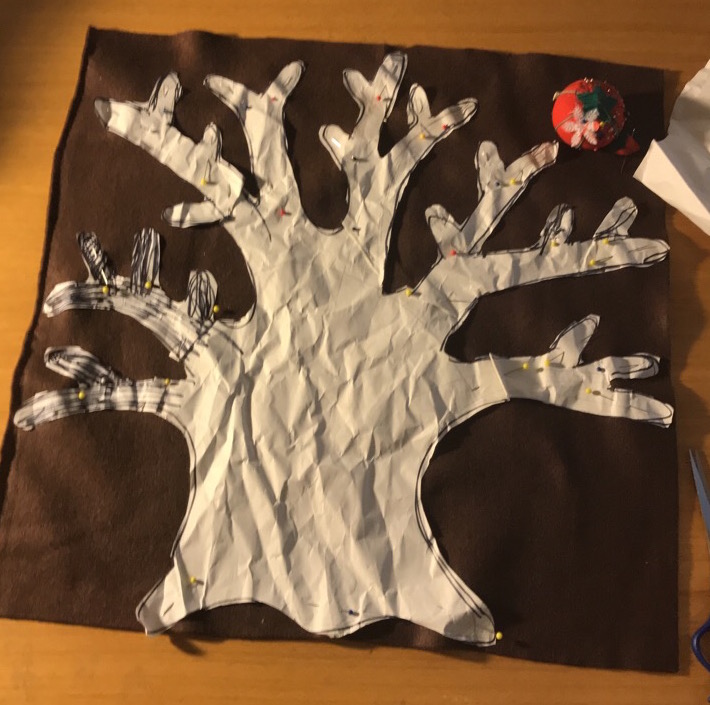

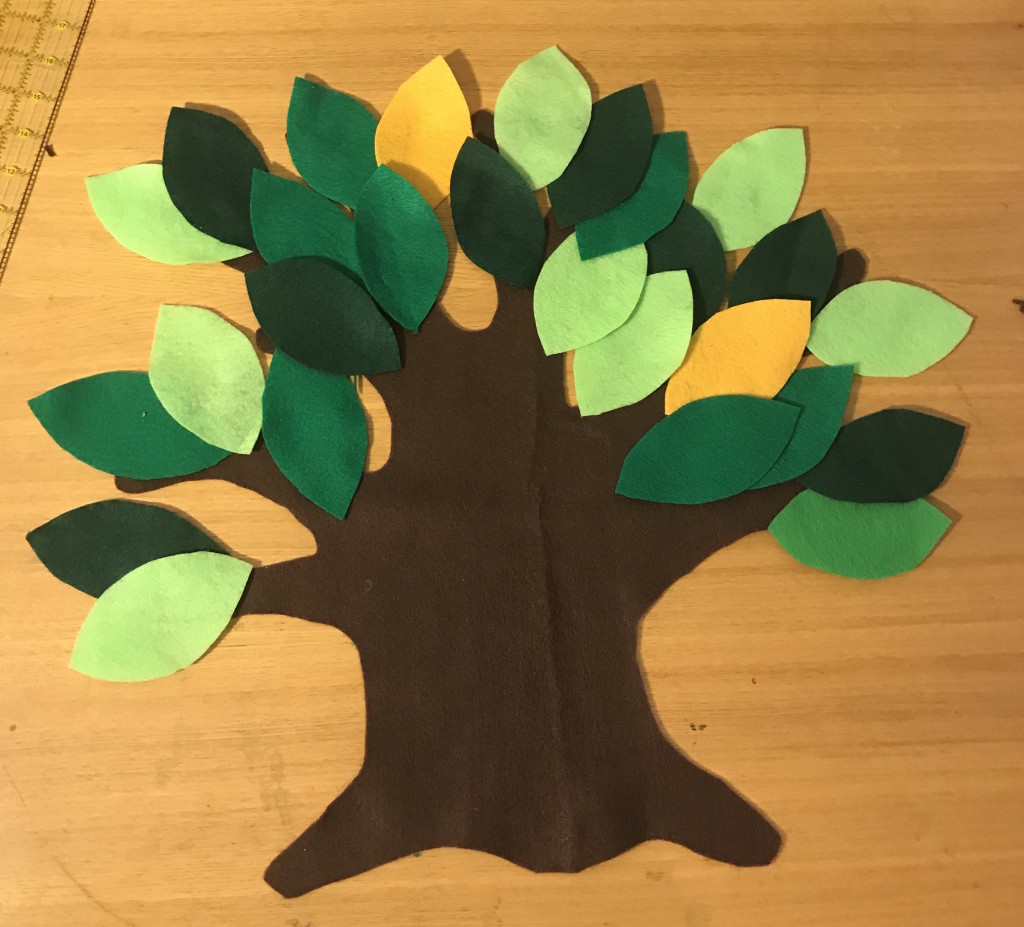

Work forwards and backwards, linking activities and books to your storytime flow until you have a plan. Refer to your Top 40 List often! Some connections will leap out at you, but don’t stop there. What are ways you can adapt items from your list to fit your flow? (As I mentioned last time, I really like to use Green Grass Grew All Around, and I’ll just change up what’s in the tree depending on the connection I’m trying to make. Maybe there will be an apple on the branch, or maybe an owl, or a swing.)

Don’t forget to think about your transition statements and interstitial messaging!

That’s it, that’s the exercise! How does it feel to let go of that theme as an organizing principle? I love knowing that I’m choosing activities and books that really speak to my storytime goals. I still routinely use themes as a filter, but I feel like I’m sharing a lot less “filler” material when I think about flow and start with my Top 40 List instead of a Google search for theme-related ideas.

Bonus: Share your plan with someone!

Keep going: Do another storytime flow plan! Try a partial flow, or plan a themed storytime but expand your keywords as you choose materials (e.g., not just bears, but honey, berries, hibernating, porridge, trees, fish…).

Apply your learning: Write out your plan and add a phrase or a sentence reminding yourself how each storytime element, component, or activity helps you meet your storytime goals. Which goals are the easiest to support? Which are harder? What can you change to more fully support all of your goals?

Shout out: To Jbrary for the link! <3 Who else has a storytime flow blog post to tell us about?
